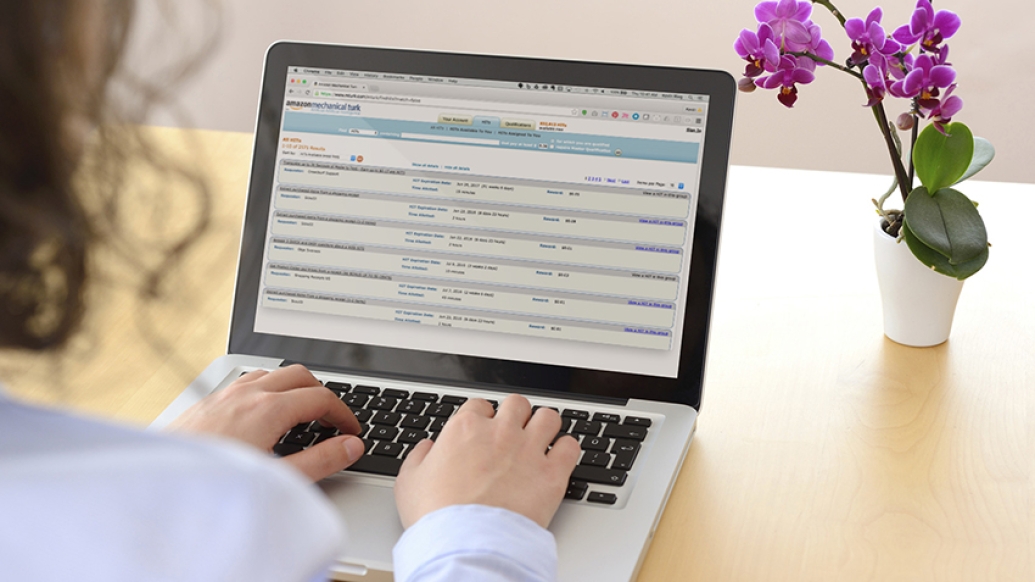

A new journal article explains how crowdsourcing platforms, such as Amazon Mechanical Turk, can help find research subjects, even from rare populations.

Finding participants for research studies is typically a tedious and slow-moving task.

So what if there were a new way to find participants that was inexpensive and time-saving?

Enter: crowdsourcing.

A new article in the Annual Review of Clinical Psychology explains why crowdsourcing platforms, which allow for distribution of tasks to many individuals by means of a flexible, open call, may be a viable way to collect data for clinical research.

"The social sciences have moved toward using online research methods and paid subject pools, including crowdsourcing," says Danielle Shapiro, Ph.D., assistant professor of physical medicine and rehabilitation at the University of Michigan and co-author of the article. "These online methods allow you to collect data easily and cheaply, but nobody had really introduced it to the clinical sciences yet."

Shapiro explains that researchers have traditionally collected data by going out on their own and trying to recruit people for studies.

"That has a lot of drawbacks. It's expensive, difficult, slow, and it's not obvious if it produces better outcomes," she says. "We decided to investigate whether the crowdsourcing site Amazon Mechanical Turk (MTurk) is a valid way to collect data for clinical research."

MTurk allows researchers to find people willing to complete small tasks (called "human intelligence tasks"). The researcher acts as the "requester" and can pay individuals, or "workers," to complete tasks on the website.

"I think most people think of crowdsourcing sites as a way to answer really simple or mundane questions," Shapiro says. "But it's interesting that you can use them to accomplish something as complex as a multiwave intervention study."

What researchers need to know before crowdsourcing

Shapiro and her colleague provide fellow researchers with key takeaways on why sites such as MTurk can be used for research purposes, as long as researchers keep in mind how the study samples are derived. These considerations include:

- Researchers should realize that MTurk samples differ from the population as a whole. These samples are younger, more liberal and more educated. They also include more whites and Asians, and clinical psychologists should note that MTurk samples have a unique profile of clinically relevant symptoms, such as elevated levels of social anxiety.

- Individual samples on MTurk are only the product of the pool of workers available at that time; thus, the demographics and characteristics of participants may differ from study to study depending on the time of day.

- Workers on MTurk are typically as honest as participants in other studies in terms of self-reported demographic and psychological traits, but they may become deceitful when financially incentivized.

- Participants recruited online are likely to have taken part in other experiments, which may affect their responses.

Shapiro also adds that benchmarking studies are needed to see how MTurk research findings compare with those of other online recruiting sites.

Overall, though, MTurk and similar sites have potential, even with their limitations.

"Clinical researchers, relative to the other sciences, have been more suspicious of internet research techniques," Shapiro says. "In general, we show they are useful tools and, like everything, they have limitations."

She adds, "We're in this wave of taking advantage of new technologies and using internet-based communities to our advantage. There's a sense that, as clinical researchers, we are missing out on a viable research method that we could capitalize on."

Department of Communication at Michigan Medicine
